Introduction

At Codigocode, we are working on deep legacy modernization using architectures built around advanced large language models (LLMs), specialized autonomous agents, Retrieval-Augmented Generation (RAG), and multi-stage reasoning orchestration.

We are not simply integrating AI features. We are building infrastructures where AI actively participates in analysis, refactoring, and architectural evolution. In that context, a fundamental question emerges:

If AI becomes infrastructure, how do we measure its real computational capacity?

Tokens and prompts are not enough. We need technical, comparable, and governable metrics that reflect real processing, active context, and computational complexity. This is where the concept of ACU (AI Compute Unit) is born.

The Problem: Tokens Are Not a Complete Metric

In most AI pricing models, consumption is estimated as:

\[ Cost = (T_{in} + T_{out}) \times TokenPrice \]

Where:

- \(T_{in}\) = input tokens

- \(T_{out}\) = output tokens

This works for simple prompt-response interactions. However, in production AI systems that rely on deep agents, RAG pipelines, vector database retrieval, context persistence, and multi-step reasoning, this token-only model breaks down because it ignores context size, retrieval work, and reasoning depth.

A more accurate representation of effective computational cost is:

\[ C = \alpha T_{in} + \beta T_{out} + \gamma C_{ctx} + \delta E + \epsilon R \]

Where:

- \(C_{ctx}\) = active context window size

- \(E\) = embedding and retrieval processing (RAG overhead)

- \(R\) = reasoning depth (multi-step internal execution)

- \(\alpha, \beta, \gamma, \delta, \epsilon\) = model-dependent weighting coefficients

Measuring only raw tokens hides these variables—especially in real software modernization workflows.

Formal Definition of ACU

We propose a normalized unit to capture effective AI capacity:

\[ ACU = \frac{\alpha T_{in} + \beta T_{out} + \gamma C_{ctx} + \delta E + \epsilon R}{1000} \]

Operationally, we simplify this as:

\[ 1 \ ACU \approx 1000 \ normalized\ effective\ tokens \]

An ACU does not represent 1000 raw tokens. It represents 1000 tokens weighted by real computational complexity. This allows AI capacity to be treated as an engineering unit—not just a billing abstraction.
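The definition above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `Weights` class, the `acu` function, and the default coefficient values are assumptions made for the example, and the units of `e` and `r` are left to whoever calibrates the coefficients for a given model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Weights:
    """Hypothetical coefficients; real values would be calibrated per model."""
    alpha: float = 1.0    # weight on input tokens
    beta: float = 1.0     # weight on output tokens
    gamma: float = 0.5    # weight on active context tokens
    delta: float = 0.0    # weight on embedding / retrieval work (E)
    epsilon: float = 0.0  # weight on reasoning depth (R)


def acu(t_in: float, t_out: float, c_ctx: float = 0.0,
        e: float = 0.0, r: float = 0.0, w: Weights = Weights()) -> float:
    """Weighted effective tokens divided by 1000, following the ACU formula."""
    effective = (w.alpha * t_in + w.beta * t_out + w.gamma * c_ctx
                 + w.delta * e + w.epsilon * r)
    return effective / 1000.0


# Two requests with identical raw token counts but different active context.
simple_call = acu(t_in=2000, t_out=1000)
agent_step = acu(t_in=2000, t_out=1000, c_ctx=6000)
print(simple_call, agent_step)  # 3.0 6.0
```

Both requests would be billed identically under a raw token count, yet the second one carries twice the ACU load once the active context is weighted in.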

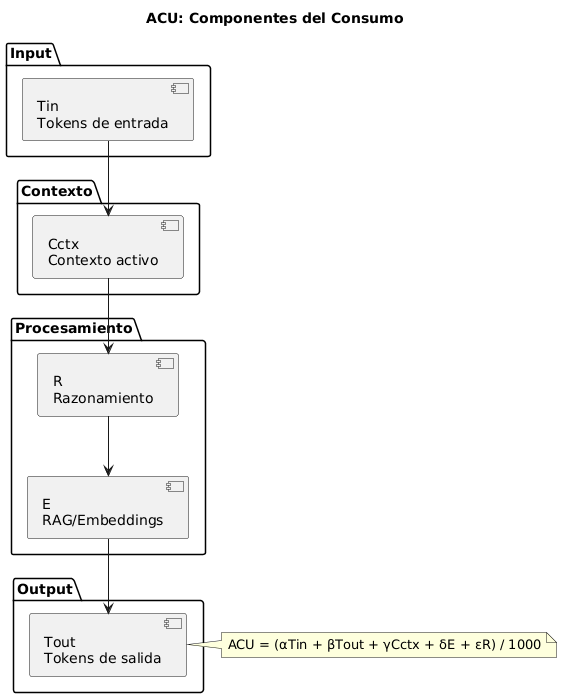

Conceptual Model

The ACU can be understood as a multidimensional function:

\[ ACU = f(Input, Output, Context, Processing) \]

The conceptual map illustrates how input tokens interact with active context and the processing layers (reasoning and retrieval) before producing an output, and how the total computational effort results in measurable ACUs.

ACU in Real AI-Driven Software Modernization

ACU applies to AI-powered modernization workflows such as:

- .NET Framework → .NET 8 migration

- Legacy PHP → modern Node.js/NestJS transformation

- WebForms → React architecture evolution

- Large-scale repository analysis (>50MB)

A typical transformation cycle may involve:

- 8,000 input tokens

- 3,000 output tokens

- 4,000 active context tokens

- RAG embedding operations

Using a simplified weighting (\(\alpha = \beta = 1\), \(\gamma = 0.5\), with the \(E\) and \(R\) terms omitted):

\[ ACU \approx \frac{8000 + 3000 + 0.5(4000)}{1000} \]

\[ ACU \approx 13 \]
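The same arithmetic extends naturally to module-level estimation. The sketch below applies the simplified weighting to a few modules and sums the result. The module names and the token counts for `auth` and `reporting` are invented for illustration; only the `billing` figures mirror the cycle described above.

```python
# Hypothetical per-module estimates: (input, output, active context) tokens.
modules = {
    "billing": (8000, 3000, 4000),    # the cycle from the worked example
    "auth": (5000, 2000, 2000),       # illustrative values
    "reporting": (12000, 6000, 8000), # illustrative values
}


def simplified_acu(t_in: float, t_out: float, c_ctx: float) -> float:
    """Full weight on input and output, 0.5 on context, E and R omitted."""
    return (t_in + t_out + 0.5 * c_ctx) / 1000


per_module = {name: simplified_acu(*tokens) for name, tokens in modules.items()}
total_acu = sum(per_module.values())

for name, value in per_module.items():
    print(f"{name}: {value} ACU")
print(f"total: {total_acu} ACU")
```

With these inputs the three modules come to 13, 8, and 22 ACU, for a total of 43 ACU.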

This enables:

- Module-level AI capacity estimation

- Multi-tenant AI governance

- Enterprise workload projection

- Intelligent model orchestration

Economic vs Premium AI Models

One of the strengths of ACU is model abstraction.

Suppose:

\[ Cost_{economic} = 5 \ USD / million\ tokens \]

\[ Cost_{premium} = 45 \ USD / million\ tokens \]

Then, pricing one ACU as 1000 normalized effective tokens, the cost per ACU becomes:

\[ Cost_{ACU} = \frac{1000}{1{,}000{,}000} \times Price_{per\ million} \]

Economic model:

\[ Cost_{ACU} = 0.005 \ USD \]

Premium model:

\[ Cost_{ACU} = 0.045 \ USD \]

ACU enables hybrid AI architecture design, dynamic model routing, cost-performance balancing, and predictable enterprise AI scaling—while keeping a single measurable unit across different model tiers.
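As a rough sketch of cost-performance balancing, the snippet below converts the two example prices into cost per ACU and computes the blended cost of a workload when a fraction of it is routed to the premium tier. The function names and the 20% premium share are assumptions for illustration, and the calculation follows the simplification used above of pricing one ACU as 1000 tokens.

```python
def cost_per_acu(price_per_million_usd: float) -> float:
    """Cost of one ACU, pricing it as 1000 tokens at the given rate."""
    return 1000 / 1_000_000 * price_per_million_usd


ECONOMIC = cost_per_acu(5.0)   # about 0.005 USD per ACU
PREMIUM = cost_per_acu(45.0)   # about 0.045 USD per ACU


def blended_cost(total_acu: float, premium_share: float) -> float:
    """Cost in USD when `premium_share` of the ACUs go to the premium model."""
    if not 0.0 <= premium_share <= 1.0:
        raise ValueError("premium_share must be between 0 and 1")
    rate = premium_share * PREMIUM + (1.0 - premium_share) * ECONOMIC
    return total_acu * rate


# Hypothetical split: 20% of a 13-ACU cycle routed to the premium model.
print(f"{blended_cost(13.0, 0.2):.3f} USD")
```

Because both tiers are expressed per ACU, the routing share becomes a single tunable parameter rather than a separate calculation per model.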

From Black Box AI to Governable Infrastructure

When AI is a simple tool, tokens may be sufficient. When AI becomes infrastructure:

- It needs assignable capacity.

- It needs tenant-level governance.

- It needs predictability.

- It needs standardized metrics.

ACU proposes a framework to measure AI capacity with the same engineering discipline we apply to CPU, memory, and bandwidth.

It is not a marketing metric. It is an engineering unit.
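To make "assignable capacity" and "tenant-level governance" concrete, here is a minimal sketch of a per-tenant ACU allowance. The `TenantBudget` class, its fields, and the tenant name are hypothetical; a production system would also need persistence, concurrency control, and period resets, none of which are shown.

```python
from dataclasses import dataclass


@dataclass
class TenantBudget:
    """Hypothetical per-tenant ACU allowance for one accounting period."""
    limit_acu: float
    used_acu: float = 0.0

    def try_consume(self, amount: float) -> bool:
        """Record the usage if it fits in the remaining allowance."""
        if amount < 0:
            raise ValueError("amount must be non-negative")
        if self.used_acu + amount > self.limit_acu:
            return False  # request would exceed the tenant's allowance
        self.used_acu += amount
        return True

    @property
    def remaining(self) -> float:
        return self.limit_acu - self.used_acu


budgets = {"tenant-a": TenantBudget(limit_acu=100.0)}

accepted = budgets["tenant-a"].try_consume(13.0)  # fits: 13 of 100 used
rejected = budgets["tenant-a"].try_consume(90.0)  # 13 + 90 exceeds 100
print(accepted, rejected, budgets["tenant-a"].remaining)  # True False 87.0
```

Expressing the allowance in ACUs rather than raw tokens means the same limit applies regardless of which model tier serves the request.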

Conclusion

Artificial intelligence applied to software modernization is inherently multidimensional. Input, output, context, embeddings, and reasoning depth interact in non-linear ways.

If AI is infrastructure, it must be measurable.

ACU (AI Compute Unit) is a proposal to measure effective AI capacity in real-world modernization environments. It is a step toward governable, comparable, and scalable AI systems.